We closed on a new piece of property last week and I’ve been exploring it on the ground and with Google Maps. There’s already a well-established non-motorized trail along two side of it, and based on the satellite imagery, it looks like there’s a partial trail approximately through the middle. I found it on the ground yesterday, and today I made an attempt at figuring out a way to connect the two trails. There’s still a foot of snow on the ground, so it’s wasn’t easy going, but I did snowshoe my way around. I’d hoped my snowshoe tracks would have hardened enough to walk it in boots this evening, but the snow had turned to sugar instead. Hopefully it’ll harden tonight when the temperature drops.

I’ve been on and off carrying my .22 rifle over the past couple months looking for grouse and snowshoe hare (hares?). I haven’t seen any grouse since I started carrying, but both last week and today I’ve seen hares. So far I’ve seen three on our property, and each time I saw them, I wasn’t carrying my rifle or my bow. The hare I saw this morning may have been laughing at me. Hares have a very clever strategy for eluding predators: when startled they run a short distance through the brush, freeze for ten to twenty seconds, then run again. For predators that are focused on movement, I think the momentary pause allows the hares to disappear due to their excellent camouflage. For a human hunter it’s a challenge because just as you get the animal in your sights, it bolts. And since you’re looking through your sights or scope, it’s much harder to pick them up after they’ve left the view. Anyway, the hare today was 20–30 feet away, in plain sight, and showed no sign that it considered me a threat. It kept right on eating alder shoots, preening, and at one point even got up on it’s hind legs and looked around. It would have been an easy target for my bow.

Had I been carrying it.

Today I went for a walk on the Creek with Nika and Piper and it occurred to me that the iPhone ought to be able to tell me not only where I am (which it does quite well with the Google-driven map application), but on whose property I’m walking through. It took me a little time, but I now have a webapp (http://swingleydev.com/gis/loc.html) that can do this (don't bother clicking on the link unless you're on an iPhone or other GPS-enabled device).

The result is a pair of pages. The first one shows you your current location, speed, and heading. With a press of a button (and a delay while the database finds the property owner) you can see the Borough information on the property you’re currently inside (assuming you’re in the Fairbanks North Star Borough—this page doesn’t do non-Borough residents any good).

Here’s how I set it up:

My web hosting provider doesn’t have a new enough version of PostgreSQL to run PostGIS, which means I need to use the spatially-enabled version of SQLite3, called Spatialite. To get the parcel database from the shapefile to Spatialite requires the following steps. Some parcels are eliminated in this process, but it’s a small fraction of the parcels in the database:

Download the parcels shapefile:

$ wget ftp://co.fairbanks.ak.us/GIS/tax_parcels.zip

Unzip it and insert it into a spatially enabled PostgreSQL database using ogr2ogr. Crash the process immediately:

$ unzip tax_parcels.zip

$ ogr2ogr -f "PostgreSQL"

-t_srs EPSG:4326

-overwrite -skipfailures

PG:"dbname='test'"

tax_parcels.shp

Fix the column definitions:

sql> DELETE FROM tax_parcels;

sql> ALTER TABLE tax_parcels ALTER COLUMN

sqft_calc TYPE numeric (22,12);

Re-import the data:

$ ogr2ogr -f "PostgreSQL"

-t_srs EPSG:4326

-append -skipfailures

PG:"dbname='test'"

tax_parcels.shp

Convert the geometry column to MULTIPOLYGON:

sql> ALTER TABLE tax_parcels DROP CONSTRAINT "enforce_geotype_wkb_geometry";

sql> ALTER TABLE tax_parcels ADD CONSTRAINT "enforce_geotype_wkb_geometry"

CHECK (geometrytype(wkb_geometry) = 'MULTIPOLYGON'::text OR

wkb_geometry IS NULL OR

geometrytype(wkb_geometry) = 'POLYGON'::text);

sql> UPDATE tax_parcels SET wkb_geometry = ST_Multi(wkb_geometry);

sql> ALTER TABLE tax_parcels DROP CONSTRAINT "enforce_geotype_wkb_geometry";

sql> ALTER TABLE tax_parcels ADD CONSTRAINT "enforce_geotype_wkb_geometry"

CHECK (geometrytype(wkb_geometry) = 'MULTIPOLYGON'::text OR

wkb_geometry IS NULL);

sql> UPDATE geometry_columns SET type='MULTIPOLYGON'

WHERE f_table_name='tax_parcels' AND f_geometry_column='wkb_geometry';

Re-import the data (there will be thousands of errors, but this insert should add the MULTIPOLYGON rows that weren’t inserted the first time around):

$ ogr2ogr -f "PostgreSQL"

-t_srs EPSG:4326

-append -skipfailures

PG:"dbname='test'"

tax_parcels.shp

Get rid of the illegal polygons:

sql> DELETE FROM tax_parcels WHERE NOT ST_IsValid(wkb_geometry);

Convert to spatialite:

$ ogr2ogr -f "SQLite"

tax_parcels.sqlite

PG:"dbname='test'"

-dsco SPATIALITE=YES

With the data in the proper format, I built a mobile web page that can pull location information from the mobile device, display it on the screen, and pass this information to a page that finds and displays the parcel information from the Spatialite database. JavaScript handles gathering the location information, but because I don’t control the web server, I had to write a CGI script to pass a query to spatialite and format the results as a list. This CGI is called by the main page from a <form> element. The spatialite query looks like this:

sqlite> SELECT street_add, owner_firs, owner_last,

owner1, owner2, owner3, mail_add, ci_st_zip,

round(acres_calc, 1) as acres, ’$’ || total as total,

’$’ || land as land, ’$’ || improvemen as improvements,

pan, sub, block, lot, road_water, lot_size, units,

neighborho, primary_us, tax_status, tax_year, mill_rate,

business, year_built, situs_numb, situs_name

FROM tax_parcels

WHERE Intersects(GEOMETRY, SetSRID(MakePoint(lon, lat), 4326));

Again, implementing this would be a lot easier if I could install PostGIS on the server and use PHP to access the data directly. Because I can’t, spatialite and CGI do the trick.

Update: I added a few more steps to convert the initially imported POLYGON layers to MULTIPOLYGON, which then allows us to include the MULTIPOLYGON rows from the shapefile.

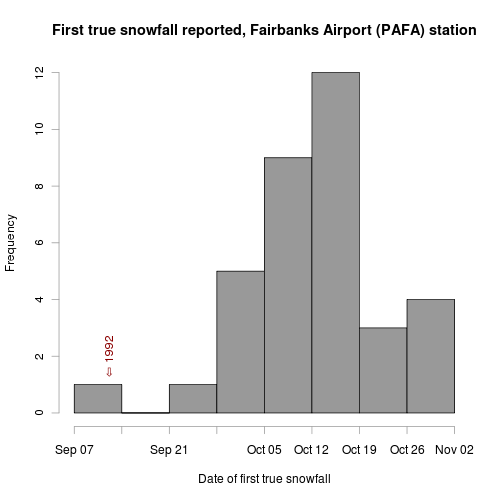

On Wednesday I reported the results of my analysis examining the average date of first snow recorded at the Fairbanks Airport weather station. It was based on the snow_flag boolean field in the ISD database. In that post I mentioned that examining snow depth data might show the date on which permanent snow (snow that lasts all winter) first falls in Fairbanks. I’m calling this the first “true” snowfall of the season.

For this analysis I looked at the snow depth field in the ISD database for the Fairbanks station. The data was present for the years between 1973 and 1999, but isn’t in the database before that date. I’m not sure why it’s not in there after 1999, but luckily I’ve been collecting and archiving the data in the Fairbanks Daily Climate Summary (which includes a snow depth measurement) since late 2000. Combining those two data sets, I’ve got data for 27 years.

The SQL query I came up with to get the data from the data sets is a good estimate of what we’re interested in, but isn’t perfect because it only finds the date of first snow that lasts at least a week. In a place like Fairbanks where the turn to winter is so rapid and so dependent on the high albedo of snow cover, I think it’s close enough to the truth. Unfortunately, the query is brutally slow because it involves six (!) inner self-joins. The idea is to join the table containing snow depth data against itself, incrementing the date by one day at each join. The result set before the WHERE statement is the data for each date, plus the data for the six days following that date. The WHERE clause requires that snow depth on all those seven dates is above zero. This large query is a subquery of the main query which selects the earliest date found in each year.

There must be a better way to deal with conditions like this where we’re interested in the consecutive nature of the phenomenon, but I couldn’t figure out any other way to handle it in SQL, so here it is:

SELECT year, min(date) FROM

(

SELECT extract(year from a.dt) AS year,

to_char(extract(month from a.dt), '00') ||

'-' ||

ltrim(to_char(extract(day from a.dt), '00')) AS date

FROM isd_daily AS a

INNER JOIN isd_daily AS b

ON a.isd_id=b.isd_id AND

a.dt=b.dt - interval '1 day'

INNER JOIN isd_daily AS c

ON a.isd_id=c.isd_id AND

a.dt=c.dt - interval '2 days'

INNER JOIN isd_daily AS d

ON a.isd_id=d.isd_id AND

a.dt=d.dt - interval '3 day'

INNER JOIN isd_daily AS e

ON a.isd_id=e.isd_id AND

a.dt=e.dt - interval '4 day'

INNER JOIN isd_daily AS f

ON a.isd_id=f.isd_id AND

a.dt=f.dt - interval '5 day'

INNER JOIN isd_daily AS g

ON a.isd_id=g.isd_id AND

a.dt=g.dt - interval '6 day'

WHERE a.isd_id = '702610-26411' AND

a.snow_depth > 0 AND

b.snow_depth > 0 AND

c.snow_depth > 0 AND

d.snow_depth > 0 AND

e.snow_depth > 0 AND

f.snow_depth > 0 AND

g.snow_depth > 0 AND

extract(month from a.dt) > 7

) AS snow_depth_conseq

GROUP BY year

ORDER BY year;

See what I mean? It’s pretty ugly. Running the result through the same R script as in my previous snowfall post yields this plot:

Between 1973 and 2008 we’ve gotten snow lasting the whole winter starting as early as September 12th (that was the infamous 1992), and as late as the first of November (1976). The median date is October 13th, which matches my impression. Now that the leaves have largely fallen off the trees, I’m hoping we get our first true snowfall on the early end of the distribution. We’ve still got a few things to take care of (a couple new dog houses, insulating the repaired septic line, etc.), but once those are done, I’m ready for the Creek to freeze and snow to blanket the trails.

Another gorgeous day in Fairbanks. September is one of my favorite months. The bugs are just about gone, it’s cold and crisp in the morning, and there’s usually an abundance of sun and blue skies. This year has been no exception so far, making it hard to stay in the house. I’ve been out on several excellent walks with the dogs on the trails around our house.

We’re also in the process of getting our road and driveway fixed. We’ve been living here for two years, and both have deteriorated since we moved in. The road is a tough one because so much water runs over it during spring breakup, and I’ve been told that no matter what you put on the road, it will just wash away in a few years.

Still, we had to do something, so we changed the pattern a bit this year, installing a culvert in a particularly bad place, and filling one of the deep spots with very large rocks instead of mine tailings. The hope is that they won’t get carried away in the spring flooding, and may drain well enough that water will cross there instead of running down the road. Time will tell, but at the very least, it should be a much nicer drive this winter, and will be a lot easier for me to keep it plowed.

One of the common destinations on my walks is this pond, on the property next to us. This is the same pond that I photographed and wrote about last April when I went ice skating on it. Once the Creek freezes, I like to walk upstream to this pond, cross it, and return home on the mushing trails. It’s a nice spot.

Fermentation, day one

Yesterday I brewed my ninth batch of Piper’s Irish-American Red Ale, based on a recipe from Jeff Renner posted in the Homebrew Digest. It’s an easy drinking, low alcohol (4.0—4.7%) red ale. My version isn’t a traditional Irish Red Ale because it’s got six-row malt and corn in it, but this is likely to have been the combination of grains used by early Irish immigrants to the United States. When the English first colonized North America, two-row barley for brewing was imported from Britain. Six-row barley grew much better in our climate, but it is higher in protein than two-row barley, which results in a cloudy beer, and one which spoils more readily (especially without refrigeration). The solution to this problem is to replace some of the six-row barley starches with other types of starch like corn. The high-protein barley will readily convert the starches in the corn to the simple sugars that yeast can consume, and the total amount of protein in the final product will be reduced. It was a great adaptation to the native strain of barley grown in early America.

We don’t have to worry about that now, of course, but I enjoy renewing some older brewing traditions. This batch is a little over 40% British two-row pale malt, 30% American six-row malted barley, and 20% flaked maize. There’s some flaked barley for head retention, and crystal 60 and chocolate malt to produce the malt flavor and color of a red ale.

Andrea’s photo of Piper, The Toothbrush Dog

I was trying out a new transfer pump on this batch, as my previous one was damaged by ice the last time I brewed. I’d left my water supply barrel outside overnight and when the very cold water hit the pump, it froze. The other issue with the old pump was that it would shut down when the outlet flow was constricted too much, a problem during chilling because I use a ball valve to limit the flow of cold water into the plate chiller. The new pump screamed right through the constricted flow and I got the wort from boiling to 64°F without any problems this time. I would have preferred a pitching temperature closer to 68°F, but I’d made a yeast starter, and the low wort temperature didn’t seem to slow it down at all. This morning there was a thick head of yeasty foam on the surface (as you can see in the photo).

While I was brewing, Andrea took some of the dogs to Mush for Kids where Piper was the Toothbrush Dog. She walked around with a backpack filled with toothbrushes for the kids who went to the event. Check out Andrea’s blog for more details and photos.