I’m writing this blog post on May 1st, looking outside as the snow continues to fall. We’ve gotten three inches in the last day and a half, and I even skied to work yesterday. It’s still winter here in Fairbanks.

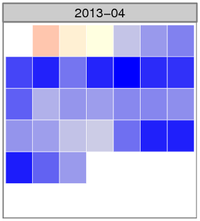

The image shows the normalized temperature anomaly calendar heatmap for April. The bluer the squares are, the colder that day was compared with the 30-year climate normal daily temperature for Fairbanks. There were several days where the temperature was more than three standard deviations colder than the mean anomaly (zero), something that happens very infrequently.

Here are the top ten coldest average April temperatures for the Fairbanks Airport Station.

| Rank | Year | Average temp (°F) | Rank | Year | Average temp (°F) |
|---|---|---|---|---|---|
| 1 | 1924 | 14.8 | 6 | 1972 | 20.8 |
| 2 | 1911 | 17.4 | 7 | 1955 | 21.6 |
| 3 | 2013 | 18.2 | 8 | 1910 | 22.9 |
| 4 | 1927 | 19.5 | 9 | 1948 | 23.2 |
| 5 | 1985 | 20.7 | 10 | 2002 | 23.2 |

The averages come from the Global Historical Climatology Network - Daily data set, with some fairly dubious additions to extend the Fairbanks record back before the 1956 start of the current station. Here’s the query to get the historical data:

    -- c_to_f() is not a built-in; it is assumed to be a user-defined
    -- Celsius-to-Fahrenheit function in this database.
    SELECT rank() OVER (ORDER BY tavg) AS rank,
           year, round(c_to_f(tavg), 1) AS tavg
    FROM (
        -- Monthly mean of the daily averages
        SELECT year, avg(tavg) AS tavg
        FROM (
            SELECT extract(year from dte) AS year,
                   dte, (tmin + tmax) / 2.0 AS tavg
            FROM (
                -- Pivot TMIN and TMAX rows into one row per date;
                -- raw values are in tenths of a degree Celsius
                SELECT dte,
                       sum(CASE WHEN variable = 'TMIN'
                                THEN raw_value * 0.1
                                ELSE 0 END) AS tmin,
                       sum(CASE WHEN variable = 'TMAX'
                                THEN raw_value * 0.1
                                ELSE 0 END) AS tmax
                FROM ghcnd_obs
                WHERE variable IN ('TMIN', 'TMAX')
                    AND station_id = 'USW00026411'
                    AND extract(month from dte) = 4
                GROUP BY dte
            ) AS foo
        ) AS bar
        GROUP BY year
    ) AS foobie
    ORDER BY rank;

And here’s how I calculated the average temperature for this April. pafg is a text file that includes the data from each day’s National Weather Service Daily Climate Summary. Average daily temperature is in column 9.

    $ tail -n 30 pafg | \
        awk 'BEGIN {sum = 0; n = 0}; {n = n + 1; sum += $9} END { print sum / n; }'
    18.1667
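For anyone who would rather do this step in Python, here is a minimal sketch of the same calculation. It assumes the file is whitespace-delimited like the awk command implies; the function name `mean_of_column` and the sample rows are hypothetical, and unlike the awk version it skips blank lines.

```python
def mean_of_column(lines, column=9, last_n=30):
    """Average a 1-based, whitespace-delimited column over the last `last_n` lines."""
    recent = [line for line in lines[-last_n:] if line.strip()]
    values = [float(line.split()[column - 1]) for line in recent]
    return sum(values) / len(values)


# Hypothetical rows with the daily average temperature in column 9.
sample = [
    "a b c d e f g h 10",
    "a b c d e f g h 20",
    "a b c d e f g h 30",
]
print(mean_of_column(sample, last_n=2))
```

With a real file, the lines would come from something like `open("pafg").read().splitlines()`.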

Cold November

Several years ago I showed some R code to make a heatmap showing the rank of the Oakland A’s players for various hitting and pitching statistics.

Last week I used this same style of plot to make a new weather visualization on my web site: a calendar heatmap of the difference between daily average temperature and the “climate normal” daily temperature for all dates in the last ten years. “Climate normals” are generated every ten years and are the averages for a variety of statistics for the previous 30-year period, currently 1981–2010.

A calendar heatmap looks like a normal calendar, except that each date box is colored according to the statistic of interest, in this case the difference in temperature between the temperature on that date and the climate normal temperature for that date. I also created a normalized version based on the standard deviations of temperature on each date.

Here’s the temperature anomaly plot showing all the temperature differences for the last ten years:

It’s a pretty incredible way to look at a lot of data at the same time, and it makes it really easy to pick out anomalous events such as the cold November and December of 2012. One thing you can see in this plot is that the more dramatic temperature differences are always in the winter; summer anomalies are generally smaller. This is because the range of likely temperatures is much larger in winter, and in order to equalize that difference, we need to normalize the anomalies by this range.

One way to do that is to divide the actual temperature difference by the standard deviation of the 30-year climate normal mean temperature. If daily temperatures are roughly normally distributed, approximately 68% of the variation occurs within -1 and 1 standard deviation, 95% between -2 and 2, and 99.7% between -3 and 3 standard deviations. That means that deep red or blue dates, those outside of -3 and 3, in the normalized calendar plot are fairly rare occurrences.
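As a rough illustration of the normalization, here is a small Python sketch. It assumes a normal distribution; the function names and the example temperatures are made up for illustration and are not taken from the plots.

```python
import math


def normalized_anomaly(observed, normal_mean, normal_sd):
    """Temperature anomaly expressed in standard deviations."""
    return (observed - normal_mean) / normal_sd


def fraction_within(k):
    """Fraction of a normal distribution within k standard deviations of the mean."""
    return math.erf(k / math.sqrt(2))


# Hypothetical day: observed 10°F, climate normal 30°F, standard deviation 8°F.
print(normalized_anomaly(10.0, 30.0, 8.0))  # -2.5

for k in (1, 2, 3):
    print(k, round(fraction_within(k), 4))  # 0.6827, 0.9545, 0.9973
```

A 20°F departure means something very different in January than in July, which is why dividing by the date's own standard deviation makes the two comparable.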

Here are the normalized anomalies for the last twelve months:

The tricky part in generating either of these plots is getting the temperature data into the right format. The plots are faceted by month and year (or YYYY-MM in the twelve month plot), so each record needs to have month and year. That part is easy. Each individual plot is a single calendar month, and is organized by day of the week along the x-axis, and the inverse of week number along the y-axis (the first week in a month is at the top of the plot, the last at the bottom).

Here’s how to get the data formatted properly:

    library(lubridate)

    cal <- function(dt) {
        # Takes a date object and returns a vector c(weekrow, daycol),
        # where weekrow starts at 0 for the first week of the month
        # and daycol starts at 0 for Sunday
        year <- year(dt)
        month <- month(dt)
        day <- day(dt)
        # Day of the week of the first of the month (1 = Sunday)
        wday_first <- wday(ymd(paste(year, month, 1, sep = '-'), quiet = TRUE))
        offset <- 7 + (wday_first - 2)
        weekrow <- ((day + offset) %/% 7) - 1
        daycol <- (day + offset) %% 7

        c(weekrow, daycol)
    }

    weekrow <- function(dt) {
        cal(dt)[1]
    }

    daycol <- function(dt) {
        cal(dt)[2]
    }

    # Vectorized versions for whole columns of dates
    vweekrow <- function(dts) {
        sapply(dts, weekrow)
    }

    vdaycol <- function(dts) {
        sapply(dts, daycol)
    }

    pafg$temp_anomaly <- pafg$mean_temp - pafg$average_mean_temp
    pafg$month <- month(pafg$dt, label = TRUE, abbr = TRUE)
    pafg$year <- year(pafg$dt)
    # Reverse the week order so the first week plots at the top
    pafg$weekrow <- factor(vweekrow(pafg$dt),
        levels = c(5, 4, 3, 2, 1, 0),
        labels = c('6', '5', '4', '3', '2', '1'))
    # Explicit levels keep the labels aligned even if a weekday is missing
    pafg$daycol <- factor(vdaycol(pafg$dt),
        levels = 0:6,
        labels = c('u', 'm', 't', 'w', 'r', 'f', 's'))
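The week-row and day-column arithmetic is easy to get wrong, so here is a short Python sketch that mirrors the same formula and can be checked against a real calendar. It is a translation for illustration only: the conversion from Python's Monday-based `weekday()` to a Sunday-based 1 to 7 value is an assumption made to match how `wday()` is used in the R code.

```python
from datetime import date


def cal(d):
    """Return (weekrow, daycol): weekrow is 0 for the first week of the month,
    daycol is 0 for Sunday through 6 for Saturday."""
    first = d.replace(day=1)
    # Python: Monday=0 ... Sunday=6; convert to Sunday=1 ... Saturday=7.
    wday_first = (first.weekday() + 1) % 7 + 1
    offset = 7 + (wday_first - 2)
    weekrow = (d.day + offset) // 7 - 1
    daycol = (d.day + offset) % 7
    return weekrow, daycol


print(cal(date(2013, 4, 1)))   # Monday in the first row: (0, 1)
print(cal(date(2013, 4, 30)))  # Tuesday in the fifth row: (4, 2)
print(cal(date(2014, 3, 31)))  # a month that needs six rows: (5, 1)
```

The March 2014 case shows why the weekrow factor needs six levels: a 31-day month that starts on a Saturday spills into a sixth calendar row.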

And the plotting code:

    library(ggplot2)
    library(scales)
    library(grid)

    svg('temp_anomaly_heatmap.svg', width = 11, height = 10)
    # Keep only the most recent years, one facet per month and year
    q <- ggplot(data = subset(pafg, year > max(pafg$year) - 11),
                aes(x = daycol, y = weekrow, fill = temp_anomaly)) +
        theme_bw() +
        # Strip axes and grid lines so each facet reads as a calendar page
        theme(axis.text.x = element_blank(),
              axis.text.y = element_blank(),
              panel.grid.major = element_blank(),
              panel.grid.minor = element_blank(),
              axis.ticks.x = element_blank(),
              axis.ticks.y = element_blank(),
              axis.title.x = element_blank(),
              axis.title.y = element_blank(),
              legend.position = "bottom",
              legend.key.width = unit(1, "in"),
              legend.margin = unit(0, "in")) +
        geom_tile(colour = "white") +
        facet_grid(year ~ month) +
        # Diverging scale: blue for colder than normal, red for warmer
        scale_fill_gradient2(name = "Temperature anomaly (°F)",
                             low = 'blue', mid = 'lightyellow', high = 'red',
                             breaks = pretty_breaks(n = 10)) +
        ggtitle("Difference between daily mean temperature\nand 30-year average mean temperature")

    print(q)
    dev.off()

You can find the current versions of the temperature and normalized anomaly plots at:

Early-season ski from work

Yesterday a co-worker and I were talking about how we weren’t able to enjoy the new snow because the weather had turned cold as soon as the snow stopped falling. Along the way, she mentioned that it seemed to her that the really cold winter weather was coming later and later each year. She mentioned years past when it was bitter cold by Halloween.

The first question to ask before trying to determine if there has been a change in the date of the first cold snap is what qualifies as “cold.” My officemate said that she and her friends had a contest to guess the first date when the temperature didn’t rise above -20°F. So I started there, looking for the first date each winter when the maximum daily temperature was below -20°F.

I’m using the GHCN-Daily dataset from NCDC, which includes daily minimum and maximum temperatures, along with other variables collected at each station in the database.

When I brought in the data for the Fairbanks Airport, which has data available from 1948 to the present, there was absolutely no relationship between the first -20°F or colder daily maximum and year.

However, when I changed the definition of “cold” to the first date when the daily minimum temperature is below -40, I got a weak (but not statistically significant) positive trend between date and year.

The SQL query looks like this:

    SELECT year, water_year, water_doy, mmdd, temp
    FROM (
        SELECT year, water_year, water_doy, mmdd, temp,
               row_number() OVER (PARTITION BY water_year ORDER BY water_doy) AS rank
        FROM (
            SELECT extract(year from dte) AS year,
                   extract(year from dte + interval '92 days') AS water_year,
                   extract(doy from dte + interval '92 days') AS water_doy,
                   to_char(dte, 'mm-dd') AS mmdd,
                   sum(CASE WHEN variable = 'TMIN'
                            THEN raw_value * raw_multiplier
                            ELSE NULL END
                   ) AS temp
            FROM ghcnd_obs
                INNER JOIN ghcnd_variables USING(variable)
            WHERE station_id = 'USW00026411'
            GROUP BY extract(year from dte),
                     extract(year from dte + interval '92 days'),
                     extract(doy from dte + interval '92 days'),
                     to_char(dte, 'mm-dd')
            ORDER BY water_year, water_doy
        ) AS foo
        WHERE temp < -40 AND temp > -80
    ) AS bar
    WHERE rank = 1
    ORDER BY water_year;

I used “water year” instead of the actual year because the winter is split between two years. The water year starts on October 1st (we’re in the 2013 water year right now, for example), which converts a split winter (winter of 2012/2013) into a single year (2013, in this case). To get the water year, you add 92 days (the sum of the days in October, November and December) to the date and use that as the year.
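To make the 92-day shift concrete, here is a minimal Python sketch of the water-year calculation and of picking the first qualifying day in each water year. The function names and the sample observations are hypothetical; the thresholds mirror the `temp < -40 AND temp > -80` filter in the query.

```python
from datetime import date, timedelta


def water_year_and_doy(d):
    """Shift the date forward 92 days so October 1 becomes day 1 of the water year."""
    shifted = d + timedelta(days=92)
    return shifted.year, shifted.timetuple().tm_yday


def first_cold_days(observations, threshold=-40.0, floor=-80.0):
    """Map each water year to (water_doy, date) of its first day with
    floor < tmin < threshold. `observations` is an iterable of (date, tmin)."""
    first = {}
    for d, tmin in sorted(observations):
        wy, doy = water_year_and_doy(d)
        if floor < tmin < threshold and wy not in first:
            first[wy] = (doy, d)
    return first


print(water_year_and_doy(date(2012, 10, 1)))  # (2013, 1)
print(water_year_and_doy(date(2013, 1, 1)))   # (2013, 93)

# Made-up observations, only to show the shape of the result.
obs = [
    (date(2012, 11, 20), -35.0),
    (date(2012, 12, 17), -41.0),
    (date(2013, 1, 27), -45.0),
    (date(2013, 12, 30), -42.0),
]
print(first_cold_days(obs))
```

Sorting by calendar date is enough here because, within one water year, date order and water day-of-year order are the same.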

Here’s what it looks like (click on the image to view a PDF version):

The dots are the observed date of first -40° daily minimum temperature for each water year, and the blue line shows a linear regression model fitted to the data (with 95% confidence intervals in grey). Despite the scatter, you can see a slightly positive slope, which would indicate that colder temperatures in Fairbanks are coming later now than they were in the past.

Our eyes often deceive us, however, so we need to look at the regression model to see if the visible relationship is significant. Here are the R lm results:

    Call:
    lm(formula = water_doy ~ water_year, data = first_cold)

    Residuals:
        Min      1Q  Median      3Q     Max
    -45.264 -15.147  -1.409  13.387  70.282

    Coefficients:
                 Estimate Std. Error t value Pr(>|t|)
    (Intercept) -365.3713   330.4598  -1.106    0.274
    water_year     0.2270     0.1669   1.360    0.180

    Residual standard error: 23.7 on 54 degrees of freedom
    Multiple R-squared:  0.0331,    Adjusted R-squared:  0.01519
    F-statistic: 1.848 on 1 and 54 DF,  p-value: 0.1796

The first thing to check in the model summary is the p-value for the entire model on the last line of the results. It’s 0.1796, which means that if there were no real trend, we’d still see a relationship at least this strong about 18% of the time. Typically, we’d like this to be below 5% before we’d consider the relationship to be statistically significant.

You’ll also notice that the coefficient of the independent variable (water_year) is positive (0.2270), which means the model predicts that the earliest cold snap is 0.2 days later every year, but that this value is not significantly different from zero (a p-value of 0.180).
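The numbers in the summary hang together, which a few lines of Python arithmetic can confirm. This sketch uses only the values printed in the lm output; the variable names are mine, and the small mismatches come from rounding in the printed summary.

```python
# Values copied from the lm summary.
estimate = 0.2270      # slope: days later per year
std_error = 0.1669
df_resid = 54
f_stat = 1.848

# The t value is the estimate divided by its standard error.
t_value = estimate / std_error

# For a one-predictor model, R-squared follows from F and the residual df.
r_squared = f_stat / (f_stat + df_resid)

# Adjusted R-squared penalizes for the number of observations (n = df + 2).
n = df_resid + 2
adj_r_squared = 1 - (1 - r_squared) * (n - 1) / df_resid

print(round(t_value, 3))        # close to the reported 1.360
print(round(r_squared, 4))      # close to the reported 0.0331
print(round(adj_r_squared, 4))  # close to the reported 0.01519
print(round(estimate * 10, 2))  # slope expressed as days per decade
```

An R-squared of about 0.03 says the year explains only around 3% of the variation in the date, which is another way of seeing how weak the trend is.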

Still, this seems like a relationship worth watching and investigating further. It might be interesting to look at other definitions of “cold,” such as requiring three (or more) consecutive days of -40° temperatures before including that period as the earliest cold snap. I have a sense that this might reduce the year to year variation in the date seen with the definition used here.

After writing my last blog post I decided I really should update the code so I can control the temperature thresholds without having to rebuild and upload the code to the Arduino. That isn’t a big issue when I have easy access to the board and a suitable computer nearby, but doing this over the network is dangerous because I could brick the board and wouldn’t be able to disconnect the power or press the reset button. Also, the default position of the transistor is off, which means that bad code loaded onto the Arduino shuts off the fan.

But I will have a serial port connection through the USB port on the server (which is how I will monitor the status of the setup), and I can send commands at the same time I’m receiving data. Updating the code to handle simple one-character messages is pretty easy.

First, I needed to add a pair of variables to store the thresholds, and initialize them to their defaults. I also replaced the hard-coded values in the temperature difference comparison section of the program with these variables.

    int temp_diff_full = 6;
    int temp_diff_half = 2;

Then, inside the main loop, I added this:

    // Check for inputs
    while (Serial.available()) {
        int ser = Serial.read();
        switch (ser) {
            case 'F': // raise the full speed threshold
                temp_diff_full = temp_diff_full + 1;
                Serial.print("full speed = ");
                Serial.println(temp_diff_full);
                break;
            case 'f': // lower the full speed threshold
                temp_diff_full = temp_diff_full - 1;
                Serial.print("full speed = ");
                Serial.println(temp_diff_full);
                break;
            case 'H': // raise the half speed threshold
                temp_diff_half = temp_diff_half + 1;
                Serial.print("half speed = ");
                Serial.println(temp_diff_half);
                break;
            case 'h': // lower the half speed threshold
                temp_diff_half = temp_diff_half - 1;
                Serial.print("half speed = ");
                Serial.println(temp_diff_half);
                break;
            case 'd': // restore the defaults
                temp_diff_full = 6;
                temp_diff_half = 2;
                Serial.print("full speed = ");
                Serial.println(temp_diff_full);
                Serial.print("half speed = ");
                Serial.println(temp_diff_half);
                break;
        }
    }

This checks to see if there’s any data in the serial buffer, and if there is, it reads it one character at a time. Raising and lowering the full speed threshold by one degree can be done by sending F to raise and f to lower; the half speed threshold is changed with H and h, and sending d resets the values to 6 and 2.
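The one-character protocol is simple enough to model off the board. Here is a small Python sketch of the same state changes, handy for checking what a string of commands would do before sending it; the function names are hypothetical, and this is a model of the logic, not code that talks to the Arduino.

```python
DEFAULT_FULL = 6
DEFAULT_HALF = 2


def apply_command(full, half, ch):
    """Return the (full, half) thresholds after one command character.
    Unrecognized characters are ignored, as in the switch statement."""
    if ch == "F":
        full += 1
    elif ch == "f":
        full -= 1
    elif ch == "H":
        half += 1
    elif ch == "h":
        half -= 1
    elif ch == "d":
        full, half = DEFAULT_FULL, DEFAULT_HALF
    return full, half


def apply_commands(text, full=DEFAULT_FULL, half=DEFAULT_HALF):
    """Apply a whole string of commands, one character at a time."""
    for ch in text:
        full, half = apply_command(full, half, ch)
    return full, half


print(apply_commands("FFh"))   # (8, 1)
print(apply_commands("FFhd"))  # (6, 2)
```

One thing the model makes obvious is that nothing stops the half speed threshold from rising above the full speed one, so it may be worth keeping an eye on the echoed values.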

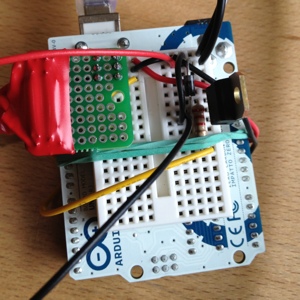

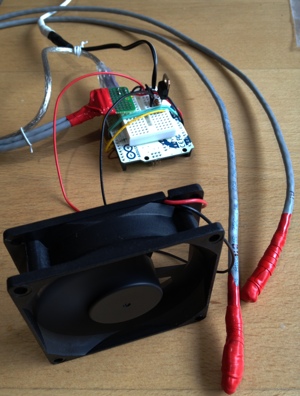

One of our servers at work has a power supply that is cooled with two small, loud fans, loud enough that the noise is annoying for the people in the office suite that houses the server. What I’d like to do is muffle the sound by enclosing the rear of the server (or potentially just the power supply exhaust) with an actively vented box. Since the fan on the box will be larger, it should be able to move the same amount of air with less noise.

But messing around with the way servers cool themselves is tricky, and since the server isn’t easily accessible, I’d like to be able to monitor what’s going on until I confirm my setup is able to keep the server cool.

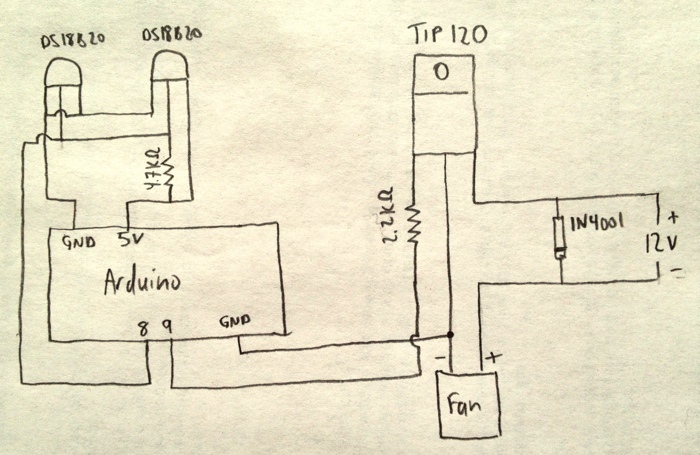

I’ve built quite a few temperature monitoring circuits, but in this project I’ll need to be able to control the speed of the vent fan based on the temperature differential inside and outside the box. This is complicated because the Arduino runs on 5 volts and the fan I’m starting with requires 12 volts. I’ll use a Darlington transistor, which can be triggered using the low power available from an Arduino pin, but which can carry the voltage and current of the fan. For higher voltages and currents, or for controlling devices that run on alternating current, I’d need to use some form of relay.

To measure the temperature differential between the inside and outside of the enclosure, I’m using two DS18B20 temperature sensors, each wired to the end of a length of Cat5e cable (and wrapped in silicone “Rescue Tape” as an experiment). The transistor is a TIP120, and a 1N4001 diode across the positive and negative leads of the fan protects the circuit from reverse voltages when the fan is turned off but is still spinning. A 2.2 kΩ resistor protects the trigger pin on the Arduino.

Here’s the diagram:

The Arduino is currently programmed to turn the fan on full speed when the temperature from the sensor inside the box is more than six degrees higher than the temperature outside the box. When the difference is between two and six degrees the fan runs at half speed, and below two degrees it shuts off. These targets are hard-coded in the software, but it wouldn’t be too difficult to change the code to read from the serial buffer so that the thresholds could be changed while it’s running.

Here’s the setup code:

    #include <OneWire.h>
    #include <DallasTemperature.h>

    #define ONE_WIRE_BUS 8
    #define TEMPERATURE_PRECISION 12
    #define fanPin 9

    OneWire oneWire(ONE_WIRE_BUS);
    DallasTemperature sensors(&oneWire);

    DeviceAddress thermOne = { 0x28,0x7A,0xBA,0x0A,0x02,0x00,0x00,0xD1 };
    DeviceAddress thermTwo = { 0x28,0x8B,0xFD,0x0A,0x02,0x00,0x00,0x96 };

    void setup() {
        Serial.begin(9600);
        pinMode(fanPin, OUTPUT);
        digitalWrite(fanPin, LOW);
        sensors.begin();
        sensors.setResolution(thermOne, TEMPERATURE_PRECISION);
        sensors.setResolution(thermTwo, TEMPERATURE_PRECISION);
    }

    void printTemperature(DeviceAddress deviceAddress) {
        float tempC = sensors.getTempC(deviceAddress);
        Serial.print(DallasTemperature::toFahrenheit(tempC));
    }

And the loop that does the real work:

    void loop() {
        // Ask both sensors for a fresh reading
        sensors.requestTemperatures();

        // Log both temperatures (°F) and their difference, comma-separated
        printTemperature(thermOne);
        Serial.print(",");
        printTemperature(thermTwo);
        Serial.print(",");
        float thermOneF = DallasTemperature::toFahrenheit(sensors.getTempC(thermOne));
        float thermTwoF = DallasTemperature::toFahrenheit(sensors.getTempC(thermTwo));
        float diff = thermOneF - thermTwoF;
        Serial.print(diff);
        Serial.print(",");

        // Choose the fan speed from the temperature difference
        if (diff > 6.0) {
            Serial.println("high");
            digitalWrite(fanPin, HIGH);
        } else if (diff > 2.0) {
            Serial.println("med");
            analogWrite(fanPin, 127);
        } else {
            Serial.println("off");
            digitalWrite(fanPin, LOW);
        }

        delay(10000); // wait ten seconds between readings
    }

When I’m turning the fan fully off or on, I’m using digitalWrite. For half speed I’m using analogWrite: because the fan is on a pulse-width-modulation (PWM) digital pin, I can set the pin to a value between 0 (off all the time) and 255 (on all the time) and cause the fan to run at whatever speed I want. I’m also dumping the temperatures and fan speed setting to the serial port so I can read those values later and evaluate how well the setup is working.
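Since the point of the serial output is to evaluate the setup later, here is a hedged Python sketch of how one logged line could be parsed and checked against the fan logic. It assumes each line has the form `thermOne,thermTwo,diff,state` as printed by the loop; the field names, the sample line, and the helper functions are made up for illustration.

```python
# PWM duty for each logged state: fully on, half speed, off.
PWM_DUTY = {"high": 255, "med": 127, "off": 0}


def fan_state(diff, full=6.0, half=2.0):
    """Mirror the if/else chain in the loop: which state a difference maps to."""
    if diff > full:
        return "high"
    if diff > half:
        return "med"
    return "off"


def parse_log_line(line):
    """Split one comma-separated log line into a dictionary of typed fields."""
    therm_one, therm_two, diff, state = line.strip().split(",")
    return {
        "therm_one_f": float(therm_one),
        "therm_two_f": float(therm_two),
        "diff_f": float(diff),
        "state": state,
        "pwm_duty": PWM_DUTY[state],
    }


# A hypothetical line, including the line ending the serial port would send.
record = parse_log_line("78.35,71.15,7.20,high\r\n")
print(record)
print(fan_state(record["diff_f"]) == record["state"])
```

Comparing the logged state with `fan_state()` is a cheap sanity check that the board is running the thresholds you think it is.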